As I began reading The Black Swan, my wife wondered if the black swan was a dancer in Swan Lake. I assure you, my reading hasn’t taken a turn into classical dancing. Instead, I’ve become intrigued by interesting events. Most of the time black swans are what the subtitle says: highly improbable. So The Black Swan: The Impact of the Highly Improbable is about the random events that aren’t probable but have a major impact on the world – or your world. Those impacts can be positive or negative.

I wanted to learn more so that I could go black swan hunting. I wanted to go looking for black swans that I might be able to domesticate. I want to bring the positive impacts of the highly improbable to my world. However, before I could get to hunting black swans, I had to understand more about them.

Predicting the Unpredictable

A black swan, as Taleb defines it, is an unpredictable event. It’s not just difficult to predict; it’s impossible to predict. We’ve got all sorts of models that are designed to predict random events, but truly random events can’t be predicted – by definition. We can only predict pseudo-random events, and only when we know the genesis of the pseudo-randomness.

Inherent to accepting black swans is accepting that you will never be able to predict them. They’ll come in ways and at times that you’ll never expect. Our only choice is to raise our awareness so that we can discover them sooner and respond more quickly. (I’m paraphrasing Richard Moon here; I quoted him in my post The Inner Game of Dialogue about the book Dialogue: The Art of Thinking Together.)

Thinking for Survival

For most of human existence, we’ve been focused on the short term. We worried about water today, food tomorrow, shelter for the winter. We were focused on our day-to-day existence enough that we didn’t have any opportunity to think about the future. As humans have evolved, we’ve learned to cultivate crops and moved towards more planning and less day-to-day subsistence.

We’re continuing to seek ways to ensure our long-term survival. In the evolutionary blink of an eye, we’ve moved towards long-term planning. It’s in this long-term planning that we’ve begun to try to predict the future so that we could plan into the future. We’ve moved away from living hand-to-mouth.

We’ve become more than just a bit arrogant that we’re conquering our world by domesticating animals, and by leveraging the power of atomic energy to unleash massive destruction or to drive forward commerce. We believe that we have the ability to see the future and predict what will happen, despite nearly continuous evidence to the contrary.

Living in Indiana, I’ve several times noted that the state went bankrupt shortly after working on the canal system. The “Crossroads of America”, as Indiana is called, is a place where commerce transits. State leaders believed that a canal system would speed the transportation of goods through the state. Of course, this was correct. However, what wasn’t correct was that canals were going to remain the fastest (and cheapest) way to transport goods. Along came the railroad, and in an incredibly short time, everything was transported by rail and not by canal. (Examples of where I’ve mentioned this are The Challenger Sale and Extraordinary Minds.)

This is just one of millions of examples of how we can’t predict the future or the things that will disrupt our plans. So while we’ve started making our lives better through our thinking, we’re invariably wrong about the accuracy of our predictions. (See Thinking, Fast and Slow, Incognito, and Stumbling on Happiness for more on the accuracy of our predictions.)

Ignoring Black Swans

So what happens when you ignore black swans? Well, Everett Rogers described the folks that try as “laggards” in his book Diffusion of Innovations. Why is that? When innovations came to farming – as they did repeatedly during the middle of the 20th century – some farmers embraced the changes and tried to take advantage of the innovations, and others tried to ignore them. During the period from 1950 to 1970, the number of people fed per farmer moved from 14 to 47 – a more than threefold increase in the output of farmers. That’s great for the farmers who were on top of the innovations. However, it was a problem for those who didn’t keep pace.

From 1960 to 1970, the average price per bushel of wheat dropped from 1.76 to 1.33 – roughly 25% in 10 years. The effects of supply and demand pushed the price lower as there was a greater supply from more effective farmers. To the laggard, whose production wasn’t increasing, that meant a roughly 25% drop in income in 10 years.
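The arithmetic behind that “roughly 25%” is easy to check. Here is a minimal Python sketch using the two prices quoted above. The harvest size is a made-up number, and the sketch assumes the laggard sells the same number of bushels in both years.

```python
def percent_change(old: float, new: float) -> float:
    """Return the relative change from old to new, as a percentage."""
    return (new - old) / old * 100.0


# Figures quoted in the text: average price per bushel of wheat.
price_1960 = 1.76
price_1970 = 1.33

price_change = percent_change(price_1960, price_1970)
print(f"price change: {price_change:.1f}%")  # price change: -24.4%

# Assumption (hypothetical number): a laggard sells the same harvest both years.
bushels = 10_000
income_change = percent_change(bushels * price_1960, bushels * price_1970)
print(f"laggard income change: {income_change:.1f}%")  # laggard income change: -24.4%
```

With flat production, the income change tracks the price change exactly, whatever harvest size you plug in.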

Iowa farmers applied innovations and the results of others’ hard work to increase their yield and improve their ability to survive downturns. In fact, this period of rapid growth in farming created another unexpected (or at least partially unexpected) effect. Farmers had built an expectation of increasing production. When production sagged, and the market prices continued to settle in the 1980s, we saw a rise in foreclosures on farms. This trend spawned songs like Rain on the Scarecrow and the rise of Farm Aid. We’ve continued to increase production, so now we’re feeding hundreds of people per farmer, but some of that is due to consolidation.

While earlier years had lower production numbers, the variability was very tight – so a 30% drop in year-over-year production wasn’t an expected event. We know from systems thinking that, as you increase output, you reduce the number of stabilizing influences, and therefore create more variability. (See Thinking in Systems for more.) However, no one had ever seen such large variability in output before.

Prepared for Everything

The Boy Scout motto is “Be Prepared.” “Preppers” are preparing for the end of the world as we know it. Whether it’s a nuclear winter, a zombie apocalypse, or anarchy, they want to be ready for it. It means growing your own food and having stocks of anything you can’t produce yourself, including guns, ammunition, and the tools to reload rounds. Here’s the problem: you can’t be prepared for everything. Take a prepper and give them a scenario that’s never happened before and they quite literally can’t prepare for it.

Take the zombie apocalypse. How do you know how you’ll be able to defend your family? At what rate will the zombies come? If you shoot them, will they recover or not? (I mean heck, they’re already dead – it’s not like you can kill something that’s dead.) So, for any scenario that has never happened before, there will be some level of uncertainty as to what rules will and won’t apply. After all, during the riots in LA after the Rodney King verdict, people still parked inside the lines in parking lots – some rules are kept even in anarchy.

Lies, Damn Lies, and Statistics

Many years ago, before I got into the habit of writing a book review for most of the books that I read, I read the book Lies, Damn Lies, and Statistics. After starting to write book reviews, I read How to Measure Anything. Neither is the kind of hard-hitting statistical theory book that I might have to read for a college course in statistics, but they do lay out the basic foundations. I was always impressed at the elegance of the arguments. How to Measure Anything was particularly quick to point out that in statistics you can reach a confidence range very quickly, even with only a few data points. I found this very interesting and somewhat disturbing.
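One well-known result of this kind is the “Rule of Five” from How to Measure Anything: if you take five random samples from a population, the population median lies between the smallest and largest of them about 93.75% of the time. The Python sketch below is only an illustration. The lognormal population, the trial count, and the function names are my own assumptions, not anything from the book.

```python
import random


def rule_of_five_probability(n: int = 5) -> float:
    """Chance that the population median lies between the min and max of n random samples."""
    # The median is missed only when all n samples land on the same side of it.
    # Each side has probability 0.5 ** n, and there are two sides.
    return 1.0 - 2.0 * 0.5 ** n


def simulate(trials: int = 20_000, n: int = 5, seed: int = 0) -> float:
    """Estimate the same probability by sampling a skewed (lognormal) population."""
    rng = random.Random(seed)
    true_median = 1.0  # median of lognormal(mu=0, sigma=1) is exp(0) = 1
    hits = 0
    for _ in range(trials):
        sample = [rng.lognormvariate(0.0, 1.0) for _ in range(n)]
        if min(sample) <= true_median <= max(sample):
            hits += 1
    return hits / trials


exact = rule_of_five_probability()
estimate = simulate()
print(f"exact: {exact:.4f}")  # exact: 0.9375
print(f"simulated: {estimate:.4f}")
```

The result holds only when the samples are drawn at random from the population you actually care about. It says nothing about outcomes that the population you sampled has never produced.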

The arguments for things like Fermi estimates – where we can guess at the answers and be mostly right if we have enough people and we have experience with the answers – are valid. There are equally valid concerns, as the Drake Equation – which “calculates” the number of detectable civilizations in our galaxy – points out. When we don’t have sufficient experience, there’s no converging on a solution. This is the heart of the black swan problem. How much experience is “enough” experience?

On September 10th, 2001, the probability of someone hijacking a plane and crashing it into a building to cause a catastrophic failure was effectively nil given what we knew. It had never been done before. No one had ever hijacked a plane and run it into a skyscraper. However, by the end of the horrific day that was September 11, 2001, we knew that someone hijacking a plane and crashing it into a building was not just possible, but had been done. We clearly didn’t have enough knowledge of terrorist activities on September 10th to accurately predict the future. That’s the point. You can’t predict a black swan – an unpredictable event.

Taleb was in the fortunate position of having just published Fooled by Randomness one week before the event of 9/11. He received phone calls and emails wondering how he had predicted the event. He hadn’t predicted it. He had devised a thought experiment that unfortunately coincided with an actual event that happened shortly after publishing. Hindsight made it appear that he had great insight into the event, when in reality it was just random happenstance.

Unlike the pristine bell curves and clean mathematics of statistics, the real world is filled with what Mandelbrot would call “roughness.” That is, the real world is so complex that we cannot see its complexity. We can’t say what constitutes enough evidence about the possible outcomes, because we’ve not seen them all. (See Fractal Along the Edges for more about Mandelbrot and roughness.)

What it Takes to Be Successful

Over the last few years, I’ve considered what it takes to be successful. There are the ideas of Malcolm Gladwell in Outliers (borrowed from Anders Ericsson – whose new book Peak I just reviewed) that it takes 10,000 hours of practice (though Ericsson never said this). At one level, the answer is to get into flow (See Flow and Finding Flow) and use that as the mechanism driving continuous improvement (See The Rise of Superman). This is the core of the Protestant work ethic. In essence, “work hard and God will reward you”.

However, the more I look at great leaders, the more I realize that the single biggest factor in their success isn’t inside of their control. The single biggest factor is luck. That is, a set of circumstances got created around them that they took advantage of, and the result was phenomenal success. To be clear, I’m not saying that they didn’t work to develop skills, that they didn’t take risks, or that they don’t deserve success. I’m saying that the key ingredient, and the one which they had no control over, was luck. There are pithy quotes about “luck is the residue of preparation” (Jack Youngblood) and “chance favors the prepared mind” (Louis Pasteur). However, these quotes seem empty and hollow next to the randomness that defines life.

I’m more and more impressed by the people that I meet who live in relative obscurity but who are just as wise as, and perhaps more powerful than, some of the people who have become famous for their work. I’m reminded of the number of people who became famous posthumously. I think that I’ve come to realize that some of the black swans are welcome, if unexpected, guests. They’re the “luck” that moves people from the category of relative obscurity to the spotlight.

I often say to my friends that I don’t know what doors God is going to open, but I’m going to put my running shoes on while I wait. That is, I believe that there is truth in the pithy quotes, in that you have to be prepared to take advantage of the lucky breaks that you get.

How Black Swans Change Your Course

If you believe that you can map out the course of your life from beginning to end without worrying about black swans or unexpected circumstances, then I wish you luck. The famous and successful folks I’ve spoken with or whose works I’ve read have admitted that their best-laid plans haven’t led them to where they are. They’re where they are by taking advantage of the opportunities in front of them. (Some of the most direct writing about this is in Extreme Productivity.)

If you look at my career – at its current state – it’s shaped substantially by Microsoft SharePoint. My consulting is focused around the product, though this wasn’t always the case. I did infrastructure consulting as well as software development before I started getting more focused on SharePoint. Even today, when my engagements are less about the technology and more about the organization, the work can be attributed to my experience with implementing SharePoint in technically beautiful ways, while also recognizing that adoption, training, and most importantly organizational change weren’t done properly. That led me to my interest in organizational development, and how to get organizations to be more effective.

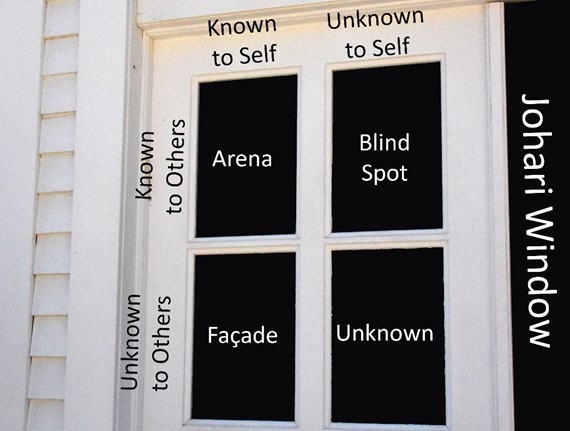

Johari Window

Black swans come through a specific spot in a window – the Johari window. It’s a technique to help people better understand themselves and others. It is primarily used in the context of helping someone – or a team – get better. On one dimension, there are others, and their “known” and “unknown”. The other dimension is self, again with known and unknown. So things that are known to others but not you are a blind spot. However, more interesting in this context, is that there is a spot where neither you nor others know about something. It’s an unknown-unknown. It’s a black swan.

If no one knows something, then how can it be predictable?

Discontinuity

I’ve been fascinated by the hydrodynamics of locks. They’re amazing feats of engineering. It’s interesting to see how boats are lifted and lowered by the power of water flowing from areas of higher potential energy to areas of lower potential energy.

Locks represent the place of discontinuity for the water. On one side it’s higher, and on the other it’s lower. In essence the water takes a jump – like a waterfall, but without the potentially disastrous effects of hitting the rocks.

We’re lulled into a sense of complacency that all things should be continuous. As you add more milk to the glass, the level slowly rises. It doesn’t stay at one state and suddenly jump to full. However, discontinuity is exactly what a black swan brings. Things are one way, then the next moment they’re radically different.

In Demand, there’s a discussion of Zipcar, and how membership jumped dramatically when the walk to get to a car was five minutes instead of ten minutes. This is a discontinuity. At one distance there are few subscriptions, and at the other there are many.

Systems and Swans

Black swans are somewhat expected, and they’re expected to be unexpected. In systems thinking (see Thinking in Systems), when you increase output you do so by reducing stocks (or buffers) and the counterbalancing loops that help to recover the system to the “normal” state. The result is greater efficiency but less resiliency. That is, the system takes longer to recover from an imbalance, or it will suffer a catastrophic failure rather than return to the normal state.

Civilian-use airplanes are designed with something called dynamic stability. That means, all things being equal, they’ll try to go in a straight line, and generally speaking they’ll be in a slight climb. This is built into the design of the plane. It should naturally correct for a small amount of tilt to one side or the other, and should respond well to the pilot trying to obtain a straight and level flight. The pilot shouldn’t have to fight to get these relatively common effects. This is good because it reduces the pilot’s burden, and improves resilience. However, dynamic stability comes at a cost. The cost is that the plane isn’t 100% efficient going through the air.

Compare this with the newer aircraft that have been designed for military use, which have dynamic instability. That is, they don’t want to fly straight and level. It’s sort of like trying to balance on the top of a ball. The benefit of these designs is better performance, which is traded for more control inputs. The compensating system that is in place, which allows these aircraft to be flown (and even to do vertical takeoff), is that the control systems are very sophisticated. They differentiate between the unprovoked changes in the aircraft attitude and those which are in response to the pilot’s controls. The systems make minute changes to the actual control surfaces much faster than a pilot could possibly respond, which creates an overall aircraft that is still flyable.

I’ve seen some truly amazing control system compensations after a pilot lost a wing or had other substantial damage to the aircraft. It’s these scenes that make you wonder if the control systems managed to defy the laws of aerodynamics. However, what I haven’t seen – but know happens – is a control system failure that leads to the loss of the aircraft. Whenever we try to reach the edge of performance, we interact with complicated systems, and ultimately we have very little ability to predict the outcomes. (See Diffusion of Innovations for our inability to predict the impact of an innovation.)

The problem with systems is that we rarely fully understand them and the various feedback loops that keep the system operational – even under extreme circumstances. So it’s one thing to talk about how we’re reducing resilience by increasing output because we’re removing or reengineering some of the feedback loops, but quite another to recognize that, the more that we optimize systems, the more likely it is that we’ll have an unexpected problem.

Consider for a moment the manufacturing shift to “just in time” inventory. This allows the buffers (stocks) of parts to be near zero by assuming predictability in the supply chain. The idea is that, if you know how much you’re going to consume (your daily usage), and you know how long it takes for a vendor to get you more (lag time), you can set the system up so that the materials arrive right as you need them. This works great as a way to reduce the cost of storage of the raw materials and lower capital requirements (due to the lower stocks of raw materials). This is a good thing – right up to the point where it isn’t.

In this same scenario, what happens when the supplier is late? Instead of depleting inventory, the entire production line shuts down waiting on the parts. My brother flew more than one box of parts in a charter aircraft just to keep production lines going.
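To make the trade-off concrete, here is a minimal Python sketch of the just-in-time idea under deliberately simplified assumptions: one delivery of exactly one day’s usage arrives each morning, the supplier simply skips deliveries while it is late, and all of the numbers and function names are hypothetical.

```python
def reorder_point(daily_usage: int, lead_time_days: int, buffer_stock: int = 0) -> int:
    """Stock level at which to reorder so new parts arrive as the old ones run out."""
    return daily_usage * lead_time_days + buffer_stock


def count_down_days(daily_usage: int, buffer_stock: int, late_days: int,
                    horizon_days: int = 20) -> int:
    """Count the days the line stops when deliveries pause for `late_days` days.

    Simplified model: a delivery of `daily_usage` parts normally arrives each
    morning, and the line needs `daily_usage` parts to run that day.
    """
    on_hand = buffer_stock
    pause_start = 5  # hypothetical day on which the supplier falls behind
    down_days = 0
    for day in range(horizon_days):
        supplier_paused = pause_start <= day < pause_start + late_days
        if not supplier_paused:
            on_hand += daily_usage  # the morning delivery arrives
        if on_hand >= daily_usage:
            on_hand -= daily_usage  # the line runs for the day
        else:
            down_days += 1          # no parts, so the line sits idle
    return down_days


print(reorder_point(daily_usage=100, lead_time_days=4))                 # 400
print(count_down_days(daily_usage=100, buffer_stock=0, late_days=3))    # 3
print(count_down_days(daily_usage=100, buffer_stock=200, late_days=3))  # 1
```

With no buffer, every late day is a lost production day. Two days of buffer stock absorb all but one of them, at the cost of storing and financing those parts.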

Though the impact isn’t a black swan – because it can be predicted and can be planned for – it’s the unknown variants of this problem, the ones we get when we tinker with systems, that create new black swans.

Black Swan Hunting

For me, I like to go black swan hunting. Sure, I don’t find many. It feels like I fail a lot, but I’m in good company. The idea of an electric light was a black swan – a game-changing, discontinuity-generating discovery. That’s why I do things like the child safety cards that Terri and I created in our Kin-to-Kid Connection brand of products. That’s why we’re working on a handwashing kit. That’s why we’re (mostly she is) doing healthcare-associated infection consulting. That’s why I experiment with my solar powered mini-barn.

The heart of black swan hunting is trying new things. Whether it’s an experience that’s new to you that others have done, or something that no one has ever done, it’s in these explorations of new space that you find black swans.

Innovating and Black Swans

Saying I’m hunting black swans is a bit of a misnomer. I’m not looking for the negative events. I’m looking for the positive ones. The positive ones are, in my opinion, almost always an innovation. The negative black swans may be innovations too – but other people’s innovations – or they can be a collapse or discontinuity in the system. (See above)

In systems thinking, there’s the idea of bounded rationality, which states that the actors in a system function only to the level to which they’re aware of their situation. It’s this bounded rationality that leads to problems like the Tragedy of the Commons. Any system has the possibility of overconsuming the stock resources necessary to generate the new flow. (Again, see Thinking in Systems.)

Expecting and Accepting the Unpredictable

Taleb comments in a few places that folks find his perspective unique. On the one hand, he’s encouraging us to realize that there are unpredictable events that are coming, and on the other hand, he seems relatively unfazed by this reality. He doesn’t experience the persistent sense of paranoia that some might expect.

I believe the expectation that, if you acknowledge that there are unpredictable events, then you should be always fearful misses an important part of being happy. That is, it does no good to worry about things that you can’t change. If you can’t change it, prepare for it, or prevent it, why worry?

I’m not talking about ignoring the black swans once they appear. I’m not talking about denial. I’m talking about a decision to not fret about those things which aren’t under your control. There is a peace that comes through acceptance that you can’t be prepared for everything. All you can do is what you can do. This is about accepting your limitations and knowing that there are some events for which you’ll only have the chance to respond. The Serenity Prayer calls us to accept the things we cannot change.

So we have to accept that black swan events do happen. We have to accept that we cannot fully prepare for them. However, if you want to understand more about how they function and the foolishness which is our belief in our predictions, a good place to start is by reading The Black Swan.
